We registered a blank domain, published 18 articles about Bali property regulations, and tracked every AI citation for 8 weeks. The site collected 202 citations across ChatGPT, Perplexity, Gemini, and Grok. It reached #1 domain rank on all four engines. It earned 136 tracked AI referral sessions with $0 in ad spend. This is the full dataset.

The site is BaliPropertyRules.com. We built it as a proof of concept to answer one question: can you engineer AI citations for a brand-new domain with no authority, no backlinks, and no advertising budget?

Last updated: April 2026.

- 202 total citations across 4 AI engines and 73 tracked prompts, audited 10 times over 6 weeks

- #1 domain rank on all four engines (ChatGPT, Perplexity, Gemini, Grok) within 8 weeks of domain registration

- Each engine has a different trust model. Gemini trusted broadly from day one. Perplexity gave us zero citations for 3 weeks, then broke through overnight

- ChatGPT's behavior correlated with Google's. A coverage decline and rebound coincided with the Google March 2026 Core Update

- 136 tracked AI referral sessions (9% of all traffic), estimated 300+ including dark traffic

What We Built

BaliPropertyRules.com is a proof-of-concept website we built from a blank domain to test whether AI citations can be engineered from zero. Domain registered in early February 2026. First content published the same week. Eighteen articles covering Bali property regulations, costs, and processes. DR11 on Ahrefs, earned organically. No link building, no paid placements, no advertising.

We measured 73 prompts across 4 AI engines (ChatGPT, Perplexity, Gemini, Grok), running 10 audits over approximately 6 weeks. The measurement window: February 12 to March 29, 2026.

The Numbers

Four engines, 202 citations, one domain rank: #1 on all four. The engines differ on every metric that matters. Gemini cited the broadest set of content. Grok cited us first more often than any other engine. Perplexity converted every retrieval into a citation. ChatGPT gave us the fewest citations but sent the most traffic.

| Engine | Coverage | Citations | Share of Voice | Win Rate | Domain Rank | Unique URLs |
|---|---|---|---|---|---|---|
| Gemini | 75.3% | 70 | 36.6% narrative | n/a | #1 | 18 (all articles) |
| Grok | 72.6% | 68 | 56% | 83% | #1 | 20 |
| Perplexity | 47.9% | 39 | 23.1% narrative | n/a | #1 | 14 |
| OpenAI | 28.8% | 25 | 36.8% | 71.4% | #1 | 11 |

Gemini cited all 18 articles. No other engine matched that breadth. Grok cited 20 unique URLs, including tool and category pages beyond the 18 articles. Perplexity retrieved and cited 14 articles, but when it retrieved content, it cited it 100% of the time. OpenAI covered 11 articles with a 71.4% win rate on those it did cite.
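The table figures can be sanity-checked against the 73-prompt set. The sketch below assumes that coverage means the share of tracked prompts whose answer cited the domain (the article does not define the metric formally) and that the latest audit used all 73 prompts. Under those assumptions, each reported percentage maps to a whole number of prompts, and the per-engine citation counts add up to the headline total. The function and variable names are illustrative, not part of any audit tooling.

```python
TOTAL_PROMPTS = 73  # tracked prompts, as stated in the article

# Latest-audit figures copied from the table above.
coverage_pct = {"Gemini": 75.3, "Grok": 72.6, "Perplexity": 47.9, "OpenAI": 28.8}
citations = {"Gemini": 70, "Grok": 68, "Perplexity": 39, "OpenAI": 25}


def implied_prompts(pct: float, total: int = TOTAL_PROMPTS) -> int:
    """Nearest whole number of prompts that would produce `pct` coverage."""
    return round(pct / 100 * total)


def coverage(prompts_cited: int, total: int = TOTAL_PROMPTS) -> float:
    """Coverage as a percentage, rounded to one decimal like the table."""
    return round(100 * prompts_cited / total, 1)


# Assumption: coverage = prompts with at least one citation / total prompts.
implied = {engine: implied_prompts(pct) for engine, pct in coverage_pct.items()}
total_citations = sum(citations.values())

for engine, n in implied.items():
    print(f"{engine}: ~{n}/{TOTAL_PROMPTS} prompts -> {coverage(n)}%")
print("Total citations:", total_citations)
```

If the assumption holds, the round trip from percentage to prompt count and back reproduces every value in the Coverage column, which suggests the percentages are internally consistent with a 73-prompt denominator.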

First citation, not just any citation

Being cited is one thing. Being cited first is another. BaliPropertyRules.com is the #1 ranked domain on all four engines. On Grok, the win rate ranged from 90.5% in the first audit to 83% in the latest: when Grok cited us, it usually cited us before every other source. On ChatGPT, the win rate was 71.4%. First position gets disproportionate click-through. In our experiment, the site didn't just appear in AI responses. It led them.

How Fast Each Engine Trusted a New Domain

The four engines have fundamentally different trust models. Two trusted BaliPropertyRules.com immediately. One was selective from day one and remained cautious. One gave us nothing for three weeks, then opened wide.

| Engine | First Audit | Initial Coverage | Initial URLs Cited | Trust Behavior |
|---|---|---|---|---|
| Gemini | Feb 20 | 40.6% | 11 | Immediate broad trust |
| Grok | Feb 19 | 33.3% | 11 | Immediate broad trust, 90.5% win rate from day one |
| ChatGPT | Feb 12 | 12% | 2 | Narrow trust, only time-sensitive regulation articles |
| Perplexity | Mar 4 | 17.4% | 8 | Zero for 3 weeks (8 audits at 0.0%), then sudden breakthrough |

Gemini cited 11 URLs from its first audit. So did Grok, with a 90.5% win rate out of the gate. ChatGPT cited only 2 URLs on day one, both articles about brand-new Indonesian regulations where no quality English source existed yet.

Perplexity is the outlier. Eight consecutive audits at 0.0%. Then on March 4: 17.4% coverage, 8 unique URLs, overnight. No gradual build. Binary.

Gemini's Trajectory: 10 Audits, No Drops

Across 10 audits over approximately 6 weeks, Gemini's coverage never meaningfully dropped. One minor dip from 59.4% to 58%, within measurement noise. Every other data point went up.

All 18 articles were eventually cited by Gemini, the broadest article coverage of any engine. Compare that to ChatGPT at 11 URLs or Perplexity at 14. Gemini also showed the most predictable growth pattern. Coverage went up. It stayed up. We did not observe a single audit where Gemini lost more than 2 percentage points from the prior measurement.

The other three engines all showed meaningful volatility during the same period. Grok swung between 22% and 73%. ChatGPT declined from 23.4% to 13% before rebounding. Perplexity sat at zero for three weeks. Gemini just kept climbing.

Three Weeks of Zero: The Perplexity Story

From February 12 to March 2, Perplexity cited BaliPropertyRules.com zero times across eight consecutive audits. Then on March 4, it cited 8 unique URLs at 17.4% coverage. By March 13, coverage had nearly doubled to 31.9%. By March 29: 47.9%, #1 domain rank, and 100% retrieval-to-citation conversion observed in that audit.

For comparison: an established site with existing domain authority and years of content showed 20% Perplexity coverage from its very first audit. BaliPropertyRules.com, a brand-new domain, waited three weeks. The variable appears to be a new-domain trust threshold.

We do not know what triggered the breakthrough. We will not claim to. We see a binary pattern, not a gradient. Nothing, then something.

Post-breakthrough, Perplexity grew faster than any other engine. Three data points: 17.4% on March 4, 31.9% on March 13, 47.9% on March 29. And in the March 29 audit, every time Perplexity retrieved our content, it cited it. 100% conversion.
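To put the three post-breakthrough data points on a common scale, the sketch below computes the gain in percentage points, the gain per day, and the growth ratio between consecutive audits. The dates and coverage values come from the article; the helper name `growth_between` and the choice of metrics are illustrative assumptions, not part of the original audit method.

```python
from datetime import date

# Post-breakthrough Perplexity audits from the article: (audit date, coverage %).
audits = [
    (date(2026, 3, 4), 17.4),
    (date(2026, 3, 13), 31.9),
    (date(2026, 3, 29), 47.9),
]


def growth_between(earlier, later):
    """Summarize the change in coverage between two audits."""
    (d0, c0), (d1, c1) = earlier, later
    days = (d1 - d0).days
    points = round(c1 - c0, 1)  # change in percentage points
    return {
        "days": days,
        "points": points,
        "points_per_day": round(points / days, 2),
        "ratio": round(c1 / c0, 2),  # multiplicative growth
    }


# Compare each audit with the one that follows it.
steps = [growth_between(a, b) for a, b in zip(audits, audits[1:])]
for step in steps:
    print(step)
```

Read this way, the first interval adds 14.5 points in 9 days and the second adds 16 points in 16 days, so absolute coverage kept rising while the daily rate slowed.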

The Reddit cross-test

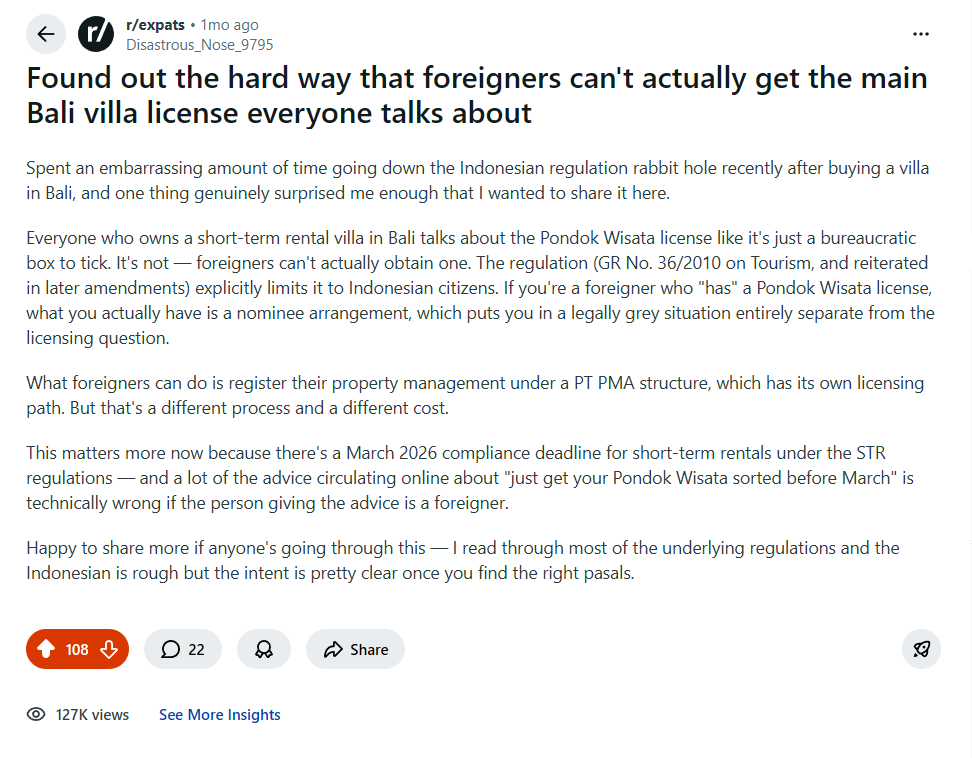

We wanted to know two things: whether the topics we chose for BPR actually resonated with humans, and how quickly high-engagement content gets retrieved by Perplexity. So we posted a finding from our Pondok Wisata licensing research on r/expats.

108 upvotes. 22 comments. 127K views. The topic worked with humans, not just algorithms. Within 24 hours of posting, Perplexity was citing the Reddit post itself in responses. Not BaliPropertyRules.com — the Reddit post. Once you are on Perplexity's radar, retrieval is fast.

But we tracked carefully: Perplexity's breakthrough on BaliPropertyRules.com 11 days later cited different articles entirely. We cannot attribute the site breakthrough to the Reddit post. Different content, different timing. What we can say is that our topic selection resonated with the audience we built the site for.

ChatGPT Cited Us for the Wrong Reasons

ChatGPT's first citations were not about content quality or domain authority. They were about information scarcity. On February 12, ChatGPT cited 2 BaliPropertyRules.com URLs. Both were articles about brand-new Indonesian regulations (UU 18/2025 and PP 28/2025) where no quality English-language source existed yet. By February 15, coverage was 14.8%, still the same 2 URLs.

Between February 20 and 27, ChatGPT slowly added articles about costs and tourism law, peaking at 23.4% coverage. Then it declined. 17.4% on March 2. 13% on March 13. We published more content during this period. Coverage went down anyway.

The rebound came on March 29, when coverage reached 28.8%, coinciding with the Google March 2026 Core Update. In our experiment, ChatGPT's citation behavior tracked Google's indexing signals more closely than the market narrative suggests. This is one experiment, not a proven relationship. But the timing is difficult to dismiss.

For a deeper look at ChatGPT's early behavior with new domains, see how we got cited by ChatGPT in 7 days.

The implication is case-bound: ChatGPT's early citations appeared to fill information gaps where no English coverage existed. The later decline followed by a Google-update-correlated rebound suggests ChatGPT may weight Google signals more than the market narrative claims. One data point.

Grok: Volatile but Loyal

Grok had the highest win rate of any engine in our experiment: 83-90.5%. When Grok cited BaliPropertyRules.com, it cited us first. That matters for click-through. First position gets disproportionate attention.

Grok also cited the most unique URLs of any engine: 20 pages, including tool and category pages that other engines ignored. Every other engine cited articles only. Grok saw the full site.

The coverage numbers tell a different story. At 72.6% coverage on the latest audit, Grok is second only to Gemini. But the path there was unpredictable: coverage swung between 22% and 73% across audits. We could not have forecast any single audit's number from the one before it.

The Retrieval Funnel

Retrieval is not citation. An engine can find your content and choose not to cite it.

| Engine | Retrieval Rate | Conversion (retrieved to cited) | Discovery Gap (never retrieved) |
|---|---|---|---|
| OpenAI | 47.9% | 60% | 52% of prompts |
| Perplexity | 47.9% | 100% | 52% of prompts |
| Grok | 72.6% | 100% | 27% of prompts |

Gemini does not expose retrieval data in the same way, so it is excluded from this table.

OpenAI and Perplexity retrieved content at the same rate: 47.9%. But Perplexity cited every piece of content it retrieved. OpenAI cited 60% of what it retrieved. Same retrieval, different conversion. Grok retrieved more often (72.6%) and also converted at 100%.

Over half of all prompts on OpenAI and Perplexity never triggered retrieval of our content at all. The discovery gap is a different problem from the conversion gap, and requires different strategies to solve.
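The funnel can be written as simple arithmetic: coverage is roughly retrieval rate times conversion rate, and the discovery gap is whatever retrieval leaves untouched. The sketch below applies that to the table figures. It assumes both rates are measured against the same prompt set; the function name and the "conversion gap" label are illustrative.

```python
# Retrieval funnel figures from the table above, in percent.
funnel = {
    "OpenAI": {"retrieval": 47.9, "conversion": 60.0},
    "Perplexity": {"retrieval": 47.9, "conversion": 100.0},
    "Grok": {"retrieval": 72.6, "conversion": 100.0},
}


def funnel_metrics(retrieval: float, conversion: float) -> dict:
    """Split 100% of prompts into cited, retrieved-but-not-cited, and never retrieved."""
    coverage = round(retrieval * conversion / 100, 1)   # retrieved AND cited
    discovery_gap = round(100 - retrieval, 1)           # never retrieved
    conversion_gap = round(retrieval - coverage, 1)     # retrieved, not cited
    return {
        "coverage": coverage,
        "discovery_gap": discovery_gap,
        "conversion_gap": conversion_gap,
    }


results = {engine: funnel_metrics(**rates) for engine, rates in funnel.items()}
for engine, metrics in results.items():
    print(engine, metrics)
```

For OpenAI this gives about 28.7% coverage, within rounding of the 28.8% reported earlier (the 60% conversion figure is itself rounded). It also makes the two problems separable: roughly 19 points of OpenAI's shortfall is a conversion gap, while the 52-point discovery gap is shared with Perplexity.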

Someone Copied Our Article. ChatGPT Recommended Them.

On February 23, bali-property-real-estate.com copy-pasted our short-term rental compliance article verbatim. Within 24 hours, the scraper was earning ChatGPT citations for queries BaliPropertyRules.com should have owned. The scraper's advantage: older domain, higher authority, faster crawl cycle.

As of March 29: BaliPropertyRules.com has 25 OpenAI citations and #1 domain rank. The scraper has 8 OpenAI citations at #8 rank. The scraper does not appear on any other engine.

Three of four engines correctly attributed the content to the original author. ChatGPT was the only engine where the copy gained traction. We do not know whether ChatGPT detected the duplicate and ranked the original higher, or whether BaliPropertyRules.com simply outgrew the scraper through subsequent content. The result is clear: 25 to 8. But the scraper still appears.

The other three engines never cited the scraper. Not once across all audits. Original content authorship appears to be an active signal on three engines and absent (or weaker) on the fourth.

AI Sent Us 136 Tracked Visitors. We Spent $0.

AI engines drove 136 tracked referral sessions, 9% of all traffic to BaliPropertyRules.com. The actual number is likely 300+ when accounting for dark traffic: AI-assisted searches that appear as direct visits in analytics.

| Source | Sessions | Users |
|---|---|---|
| Direct | 807 | 663 |
|  | 448 | 293 |
| chatgpt.com | 118 | 75 |
| Bing | 30 | 22 |
| gemini.google.com | 11 | 7 |
| copilot.com | 4 | 4 |
| claude.ai | 3 | 2 |

The engine that cites you most does not necessarily send the most traffic. The engagement was real: our villa licensing guide averaged 7:29 session duration. Want to know if AI is already sending traffic to your competitors? Run a free instant check.
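The 136-session figure and the gap between citations and traffic can both be reproduced from the tables. The sketch below sums the AI referrer rows and computes a rough sessions-per-citation index for the two engines that appear in both tables. It assumes chatgpt.com sessions map to ChatGPT and gemini.google.com sessions map to Gemini. The index is illustrative only: citations were counted across audit prompts, while sessions accumulated over the whole period.

```python
# Tracked sessions by AI referrer, from the traffic table above.
ai_sessions = {
    "chatgpt.com": 118,
    "gemini.google.com": 11,
    "copilot.com": 4,
    "claude.ai": 3,
}
ai_total = sum(ai_sessions.values())

# Latest-audit citation counts for the engines that also appear as referrers.
citations = {"ChatGPT": 25, "Gemini": 70}
sessions = {
    "ChatGPT": ai_sessions["chatgpt.com"],       # assumed mapping
    "Gemini": ai_sessions["gemini.google.com"],  # assumed mapping
}

# Rough index: tracked sessions per audited citation.
sessions_per_citation = {
    engine: round(sessions[engine] / citations[engine], 2) for engine in citations
}

print("AI referral sessions:", ai_total)
print("Sessions per citation:", sessions_per_citation)
```

On these numbers ChatGPT sent several sessions per audited citation while Gemini sent well under one, which is the coverage-versus-traffic split described above.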

Search performance over the same period: 388 clicks, 80,414 impressions, average position 5.1 in Google Search Console. All 20 pages earning impressions. Growth from 0 impressions on February 5 to 3,159 per day on March 29.

Five Observations From 10 Audits

These are case-bound observations from one experiment, not universal engine laws. We frame them as "we observed," because that is what the data supports.

1. Each engine has its own trust model

Gemini cited 11 URLs from its first audit. Perplexity gave us nothing for 3 weeks, then opened the gate overnight. ChatGPT picked up only two articles where no English source existed yet. Grok trusted us immediately but swung 50 percentage points between audits. Four engines. Four trust architectures. No single optimization covers all of them.

2. Coverage and traffic are different things

Gemini: 75.3% coverage. Eleven tracked sessions. ChatGPT: 28.8% coverage. A hundred and eighteen sessions. Read that again. The engine that cited us most sent less than a tenth of the traffic ChatGPT did. We break down why in what makes AI engines choose one source over another.

3. Only one engine rewarded the scraper

A site copied our article word for word. Three engines ignored the copy entirely. ChatGPT cited it. Still does. Original authorship is an active signal on Perplexity, Gemini, and Grok. On ChatGPT, domain authority appears to carry more weight than who wrote it first.

4. Perplexity's trust is binary

Not a gradient. Not a slow build. Zero citations across 8 audits, then 8 URLs overnight. We do not know what triggered it. We are not going to guess.

5. ChatGPT tracked Google more than we expected

23.4% coverage, then a two-week decline to 13%. Then 28.8% on the same audit window as Google's March 2026 Core Update. Correlation. One experiment. But the timing is hard to explain away.

What This Means If You Want AI to Cite Your Business

AI citations are measurable. Not abstract. We tracked 73 prompts across 4 engines, ran 10 audits, and quantified every citation and referral session. This is a performance channel with real numbers.

A blank domain hit #1 on all four engines in 8 weeks. DR11. No link building. No ad spend. Content strategy, technical optimization, and off-site brand building were the variables. But each engine responded to different signals. A single approach does not work across all four.

The sequencing, the per-engine optimization, the weekly iteration based on audit data. That is what we do for clients.

Request your free AI Visibility Report to see where AI engines mention your competitors and where they are silent about you. Or run a free instant AI visibility check right now.

The bottom line

A blank domain with 18 articles reached #1 on ChatGPT, Perplexity, Gemini, and Grok in 8 weeks. 202 citations. 136 tracked AI referral sessions. $0 in advertising. The data is above. The methodology behind it is what we do for clients.

Frequently Asked Questions

How long does it take to get cited by AI engines?

In our experiment, Gemini and Grok cited the site within the first week. ChatGPT cited specific content within 5 days but only for queries where no quality English source existed. Perplexity took 3 weeks of zero citations before a sudden breakthrough. The timeline depends on the engine.

Can a new website get cited by ChatGPT, Perplexity, Gemini, and Grok?

Yes. BaliPropertyRules.com went from a blank domain to #1 on all four in 8 weeks with 18 articles and zero ad spend.

Do AI engines use Google rankings to decide what to cite?

It varies by engine. ChatGPT showed a coverage decline and rebound that coincided with a Google Core Update. Perplexity cited nothing for three weeks despite early Google impressions. Gemini grew steadily regardless of SERP position changes. We observed correlation in one engine, not causation.

How much traffic do AI engines send?

In 8 weeks, BaliPropertyRules.com received 136 tracked AI referral sessions (9% of all traffic) from chatgpt.com, gemini.google.com, copilot.com, and claude.ai. The actual number is likely 300+ when accounting for dark traffic: AI-assisted searches that appear as direct visits in analytics.

Sources

- Cited internal citation audit data, BaliPropertyRules.com, 10 audits from Feb 12 to Mar 29, 2026. 73 prompts across ChatGPT, Perplexity, Gemini, Grok.

- Google Search Console data, BaliPropertyRules.com, 90-day overview ending Mar 29, 2026.

- Google Analytics 4 data, BaliPropertyRules.com, session-level traffic sources.

- Ahrefs domain rating, BaliPropertyRules.com, as of Mar 29, 2026.
